OUTPUT

Residency Season: Fall 2018

Catie Cuan’s OUTPUT illuminates how bodies, both human and robotic, are mediated and represented through technology. It centers on a live performance that creates relationships between vestiges of real (human) and captured (technological) bodies.

OUTPUT premiered at Triskelion Arts’ Collaborations in Dance Festival in Brooklyn on September 14, 2018. The performance was supported by the development of two new software tools: CONCAT and MOSAIC.

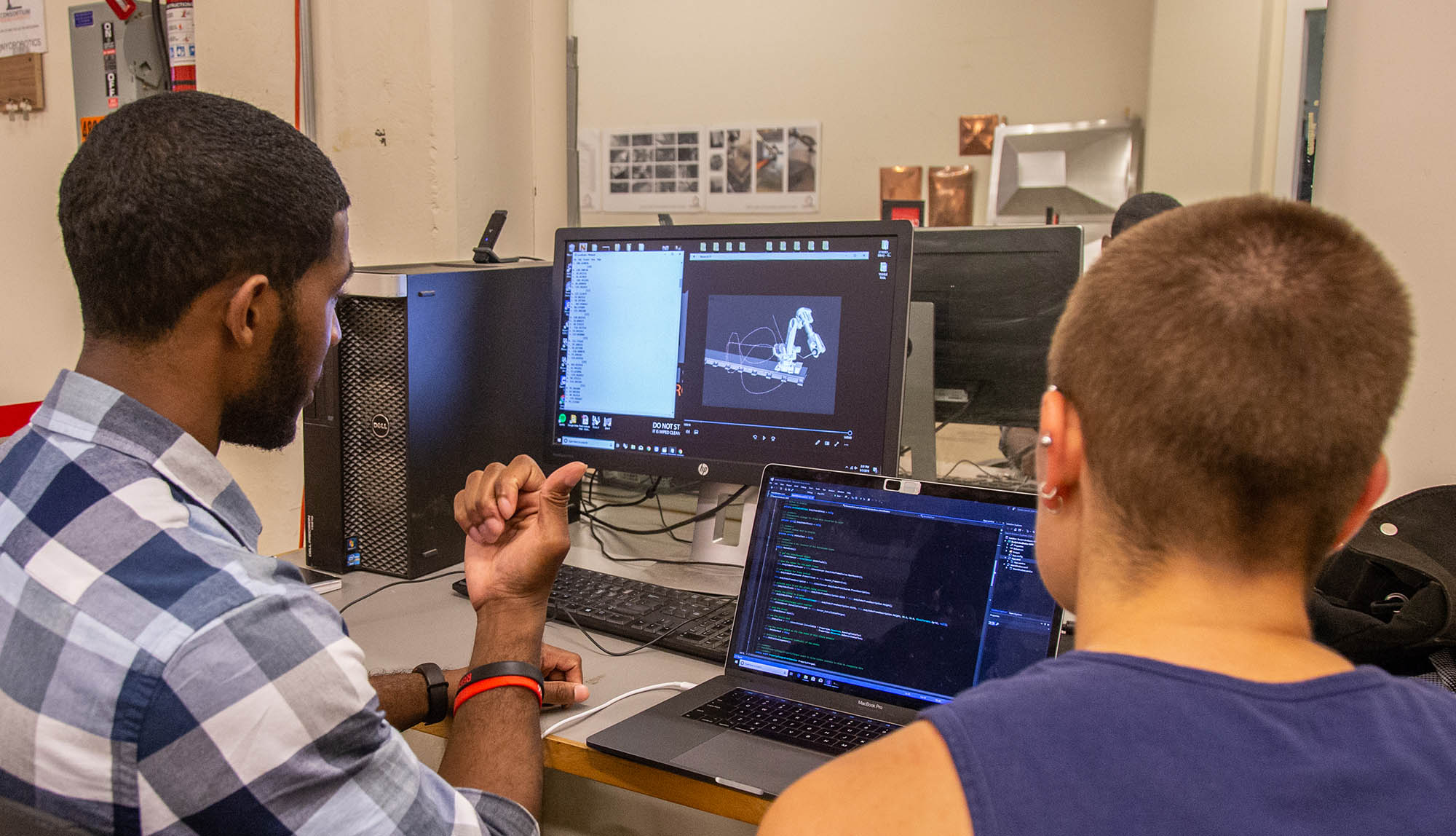

The CONCAT system displays a real-time 3D animation of a 15-foot industrial robot arm, nicknamed Wen, moving alongside a live motion capture of a person. Placing a human body next to an automated robot body invites both comparison and contrast, much as a video game might match a live body to its mechanical shadow.

The MOSAIC software uses a webcam to generate time-delayed video segments, stacked side by side. Together, the two tools leave room for improvisation during live performance, creating a link between the human form and the robotic arm.
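The time-delay idea behind MOSAIC can be illustrated with a small rolling frame buffer. This is a hypothetical sketch, not MOSAIC's actual code: the class name `DelayMosaic`, the `delays` parameter, and the representation of a frame as a list of pixel rows are all assumptions made for illustration. Each new webcam frame is stored, and copies of the frame from several steps in the past are tiled horizontally into one image.

```python
from collections import deque
from typing import Deque, List, Sequence

# Hypothetical frame format: a grayscale image as a list of rows of pixel values.
Frame = List[List[int]]


class DelayMosaic:
    """Hypothetical sketch: tile time-delayed copies of a video stream side by side."""

    def __init__(self, delays: Sequence[int]):
        if not delays or min(delays) < 0:
            raise ValueError("delays must be non-empty and non-negative")
        self.delays = list(delays)
        # Keep only as much history as the longest delay requires.
        self.history: Deque[Frame] = deque(maxlen=max(self.delays) + 1)

    def push(self, frame: Frame) -> Frame:
        """Store the newest frame and return the mosaic for this time step."""
        self.history.append(frame)
        tiles = []
        for d in self.delays:
            # The last entry is the newest frame; the entry d places before it is d steps old.
            # Until enough frames have arrived, fall back to the oldest frame available.
            index = max(len(self.history) - 1 - d, 0)
            tiles.append(self.history[index])
        # Join each row across all tiles so the delayed frames sit side by side.
        return [sum((tile[r] for tile in tiles), []) for r in range(len(frame))]
```

For example, feeding six 1x2 frames whose pixel values equal the time step, with delays of 0, 2, and 4 frames, yields a final mosaic of `[[5, 5, 3, 3, 1, 1]]`: the current frame, the frame from two steps earlier, and the frame from four steps earlier. A real implementation would read frames from a camera and use an image library for tiling; the buffer logic is the part this sketch is meant to show.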

To create this performance, Catie collaborated with Thoughtworks developers and the Consortium for Research and Robotics, a research center of Pratt Institute in New York.

OUTPUT was featured in The New York Times, on PBS NewsHour in a segment on the future of work, and in an Engadget video feature and article on robotic choreography.

The project was demonstrated at the TED Education Weekend in October 2018, and exhibited at Pioneer Works Second Sunday in April 2019 and at the Dance USA Conference in June 2019.

An academic paper describing the work and its implications, titled “OUTPUT: Translating Robot and Human Movers Across Platforms in a Sequentially Improvised Performance”, was published as part of the Movement that Shapes Behaviour symposium at the 2019 Artificial Intelligence and Simulation of Behaviour (AISB) Convention. The piece is also written up in Frontiers in Robotics and AI.