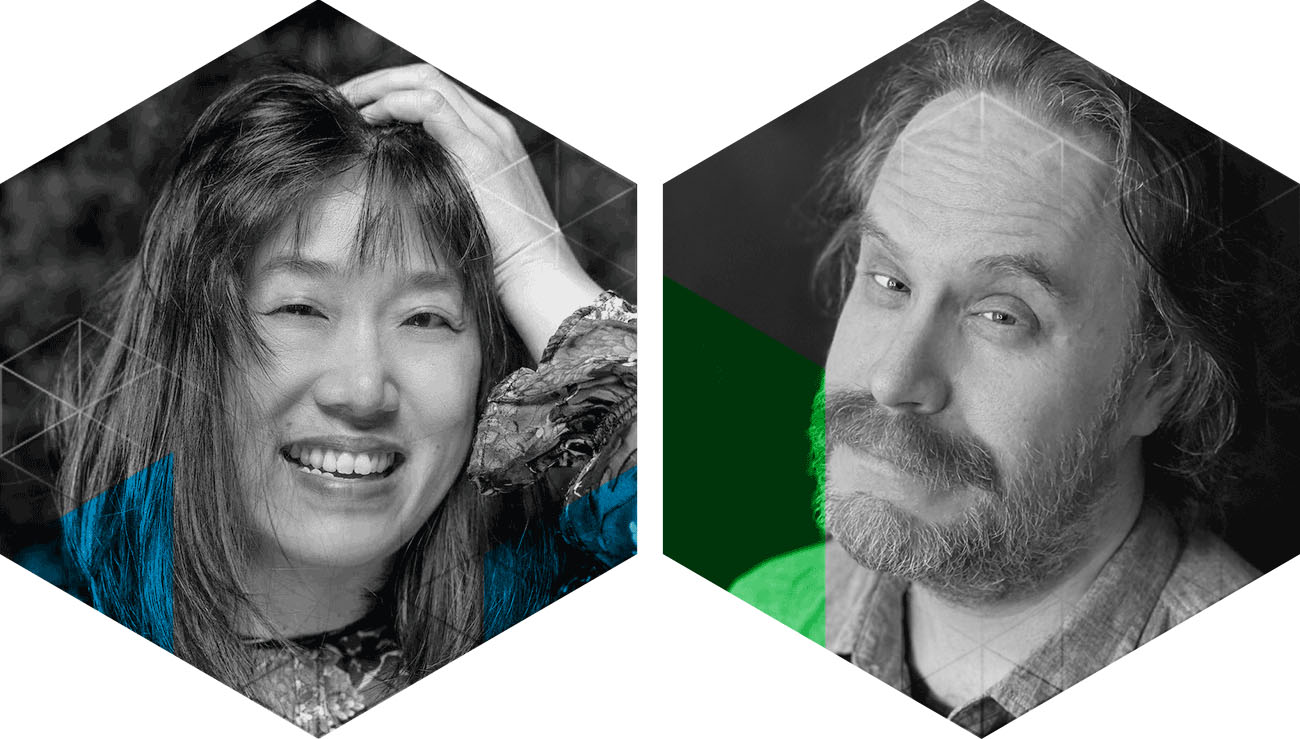

Dilate Ensemble and Sarah Weaver of NowNetArts: In Conversation

Dilate Ensemble joined special guest Sarah Weaver, Director of NowNetArts (NY), for a conversation in the field of network arts. This event was part of the “Improvising the Net(Work)” residency in partnership with CounterPulse.

The conversation focused on Dilate Ensemble’s explorations at the boundaries of telematic networks, which culminated in their CATENA performance in January.